Earcon Generator

A web-based tool for designing earcons — short audio cues that signal LLM thinking states like processing, completion, error, and waiting. Built to explore how sonic design can make conversational AI feel more present and legible.

Earcons are the sonic equivalent of icons: brief, distinctive audio signals that communicate state without language. This tool generates them specifically for the states of an AI assistant interaction. The question it explores is one I keep returning to: how do you make an intelligent system legible to the people around it?

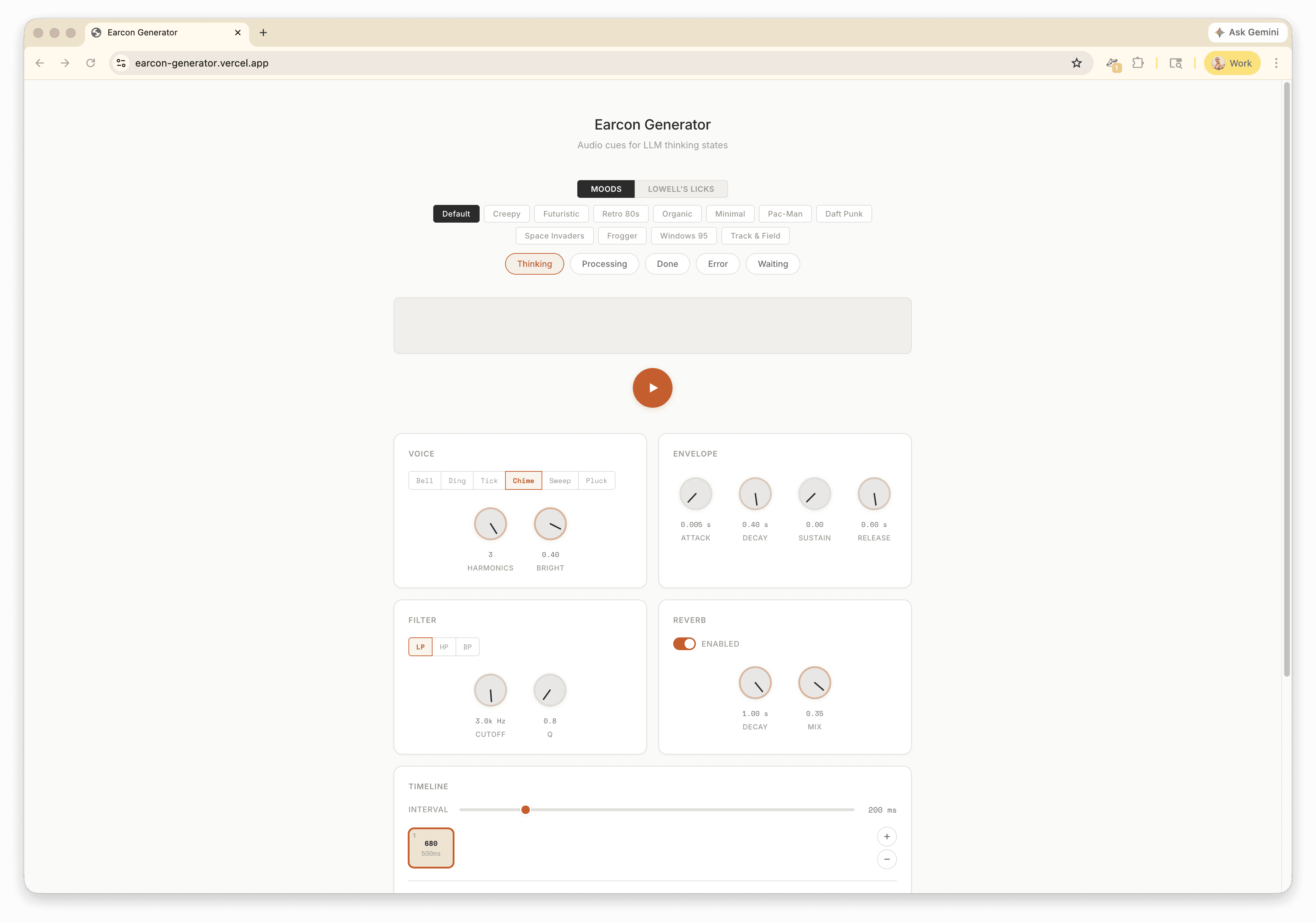

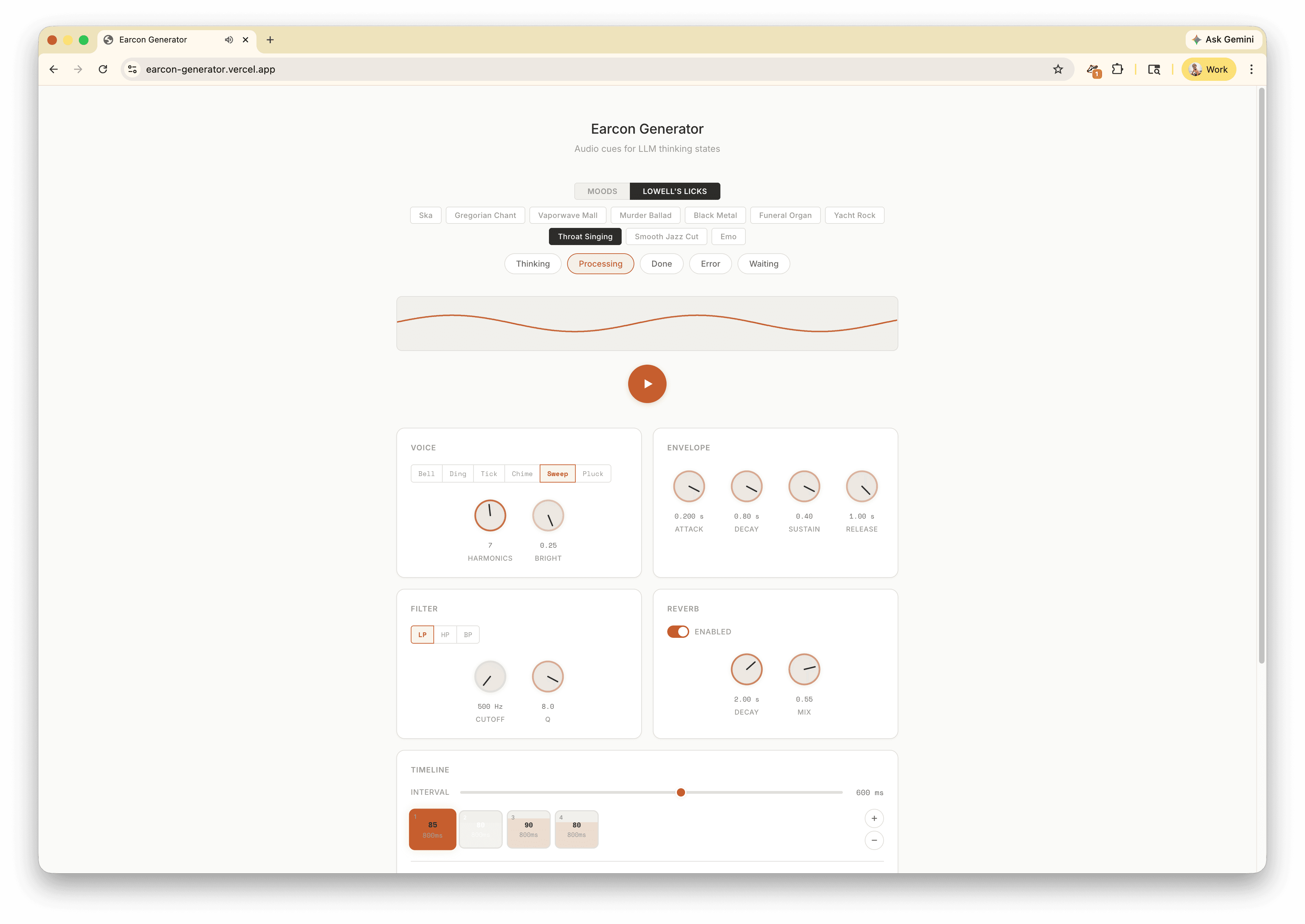

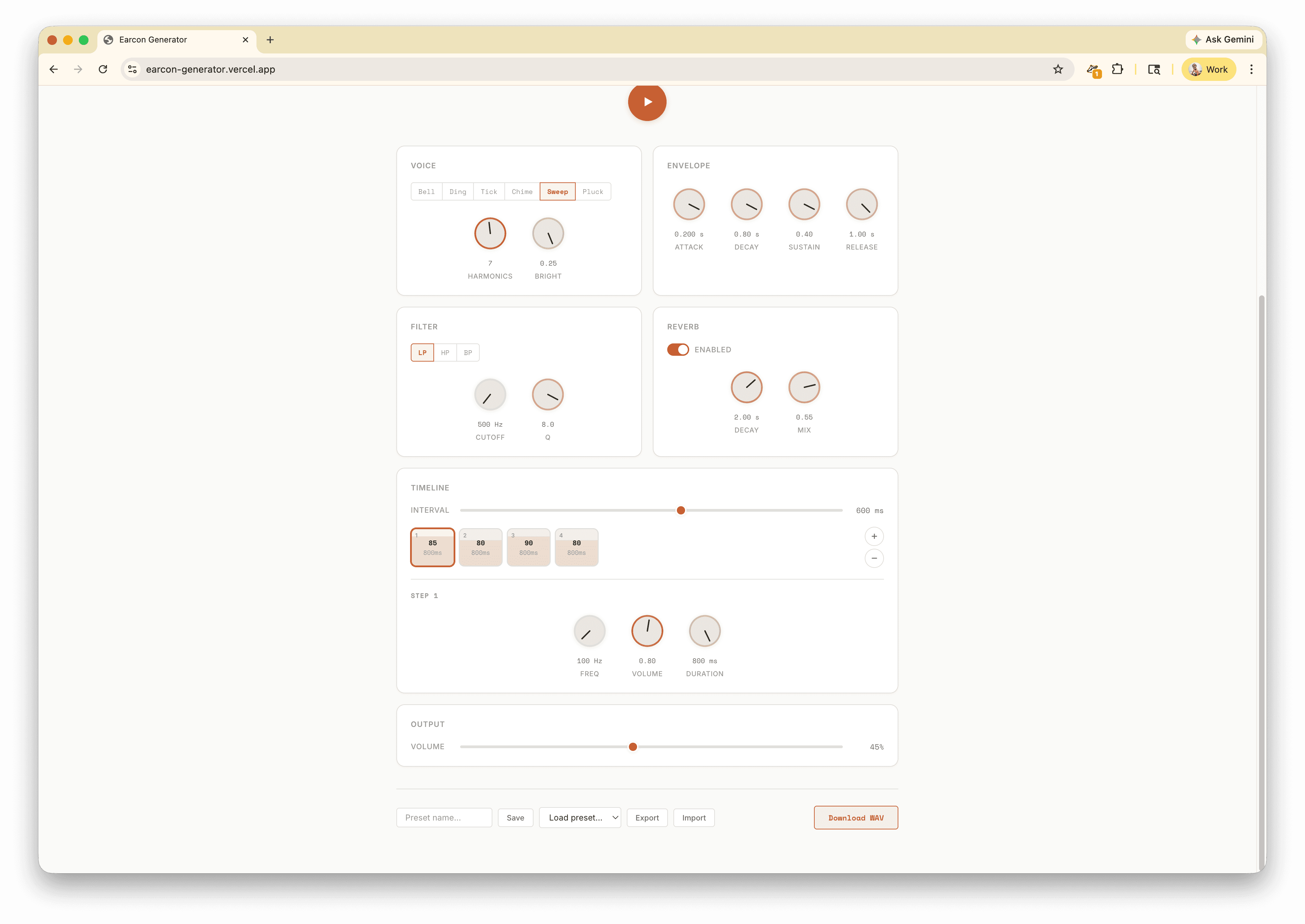

The generator offers multiple sound style presets, from clean chimes to lo-fi "creepy" palettes, with granular control over voice type (bell, ding, chime), envelope shaping, filtering (low-pass, high-pass, band-pass), reverb, and timing. You can audition cues in real time, save presets, and export WAV files for integration into prototypes.
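
To make the signal chain concrete, here is a minimal offline sketch of one cue: a bell-like voice, an attack/decay envelope, a low-pass filter, and WAV export. It is an illustration in Python, not the tool's own code (the tool runs in the browser). The function names, the partial ratios, the default parameter values, and the 44.1 kHz sample rate are all assumptions, the filter is a basic one-pole design standing in for the LP option, and reverb is left out for brevity.

```python
import io
import math
import struct
import wave

SAMPLE_RATE = 44100  # assumed; the tool's actual export rate is not stated

# Hypothetical (frequency ratio, amplitude) pairs for a bell-like voice.
BELL_PARTIALS = [(1.0, 1.0), (2.76, 0.5), (5.4, 0.25)]


def bell_voice(freq, duration, sample_rate=SAMPLE_RATE):
    """Sum a few inharmonic sine partials to approximate a bell tone."""
    n = round(duration * sample_rate)
    return [
        sum(a * math.sin(2 * math.pi * freq * r * i / sample_rate)
            for r, a in BELL_PARTIALS)
        for i in range(n)
    ]


def apply_envelope(samples, attack, decay_rate, sample_rate=SAMPLE_RATE):
    """Shape the tone with a linear attack ramp and an exponential decay."""
    attack_n = max(1, round(attack * sample_rate))
    return [
        s * min(1.0, i / attack_n) * math.exp(-decay_rate * i / sample_rate)
        for i, s in enumerate(samples)
    ]


def low_pass(samples, cutoff, sample_rate=SAMPLE_RATE):
    """One-pole low-pass filter (a simple stand-in for the LP option)."""
    dt = 1.0 / sample_rate
    rc = 1.0 / (2 * math.pi * cutoff)
    alpha = dt / (rc + dt)
    out, y = [], 0.0
    for x in samples:
        y += alpha * (x - y)
        out.append(y)
    return out


def normalize(samples, peak=0.9):
    """Scale so the loudest sample sits at `peak`, leaving headroom."""
    loudest = max((abs(s) for s in samples), default=0.0) or 1.0
    return [s * peak / loudest for s in samples]


def make_earcon(freq=880.0, duration=0.4, attack=0.005,
                decay_rate=9.0, cutoff=4000.0):
    """Voice -> envelope -> filter -> normalize; returns floats in [-1, 1]."""
    tone = bell_voice(freq, duration)
    shaped = apply_envelope(tone, attack, decay_rate)
    return normalize(low_pass(shaped, cutoff))


def to_wav_bytes(samples, sample_rate=SAMPLE_RATE):
    """Encode float samples in [-1, 1] as 16-bit mono PCM WAV data."""
    frames = b"".join(struct.pack("<h", int(s * 32767)) for s in samples)
    buf = io.BytesIO()
    with wave.open(buf, "wb") as w:
        w.setnchannels(1)
        w.setsampwidth(2)
        w.setframerate(sample_rate)
        w.writeframes(frames)
    return buf.getvalue()
```

Writing the result of `to_wav_bytes(make_earcon())` to a file opened in binary mode yields a playable cue. In this sketch, a shorter `duration` with a faster `decay_rate` reads as a crisp "ding", while a longer tail with a lower `cutoff` sounds softer and more ambient.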

This project grows directly out of my thinking about trust in hardware AI. When a wearable device transitions between states (listening, processing, asleep), it needs non-visual signals that are unambiguous and immediate. Earcons carry that trust in a way that UI toggles cannot, because you hear them even when you are not looking. The tool exists to make that sonic vocabulary designable and testable.